What Is an Image?

Image capture, composition and basic analysis

Session 2 of the Fundamentals of Microscopy workshop 2026

Ved Sharma, PhD, Advanced Image Analyst

vsharma01@rockefeller.edu

Bio-Imaging Resource Center, The Rockefeller University

February 26, 2026


Image Analysis @ Bio-Imaging Resource Center

https://imageanalysis-rockefelleruniversity.github.io/

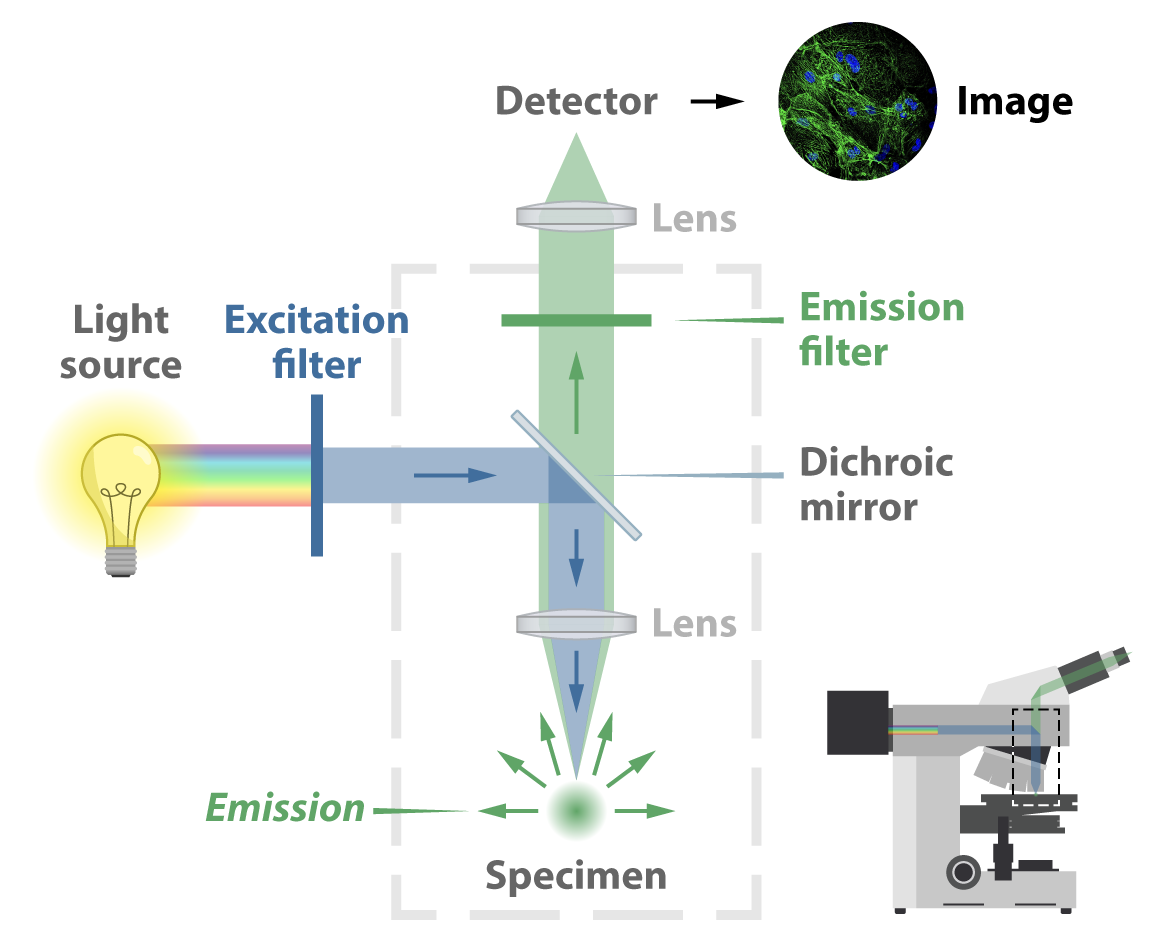

How are images formed in a microscope?

https://morgridge.org/feature/fluorescence-imaging-primer/

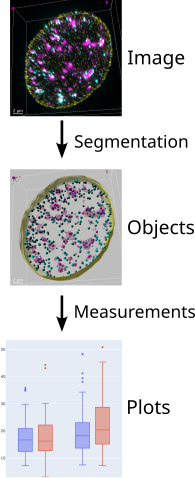

Image Analysis workflow


Confocal detectors

Detectors can be cameras or confocal-style detectors such as:

- PMTs (photomultiplier tubes)

- GaAsP (gallium arsenide phosphide) detectors

- APDs (Avalanche Photodiodes)

- SilVIR

These detectors will be discussed in Session 4 on Confocal Microscopy.

Outline

Part 1 - How cameras generate an image.

- camera types (CCD, EMCCD, CMOS)

- camera noise

Part 2 - What is an image and how to work with it.

- bit depth

- dynamic range

- metadata

- histogram

- display settings (Lookup tables, brightness/contrast)

- Noise reduction (deconvolution vs AI-denoising)

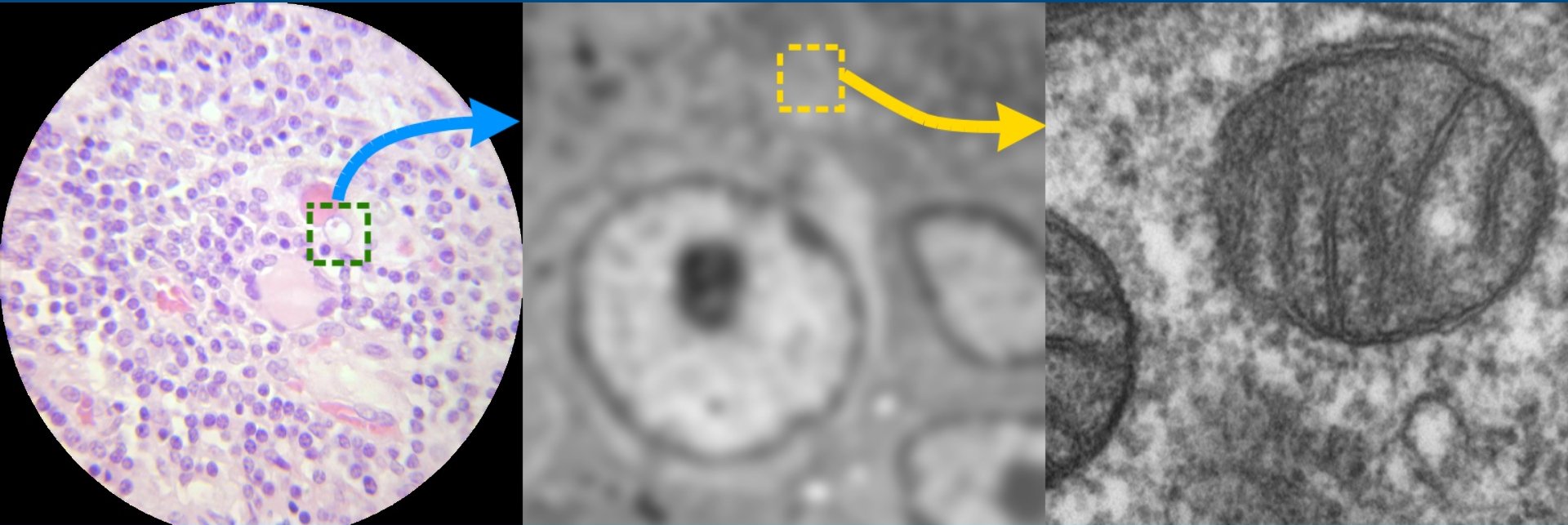

Microscopy cameras

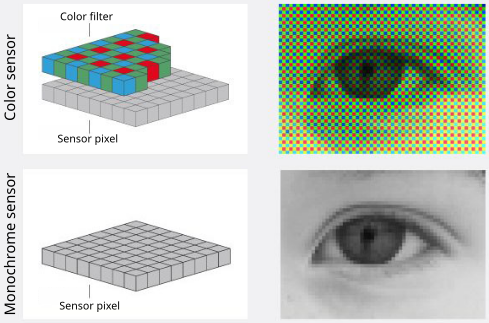

Color cameras

- used for visual inspection

- used for stains and dyes (e.g. histology)

Monochrome cameras

- for fluorescence imaging

- higher sensitivity than color cameras

- used with emission filters to capture specific wavelength ranges

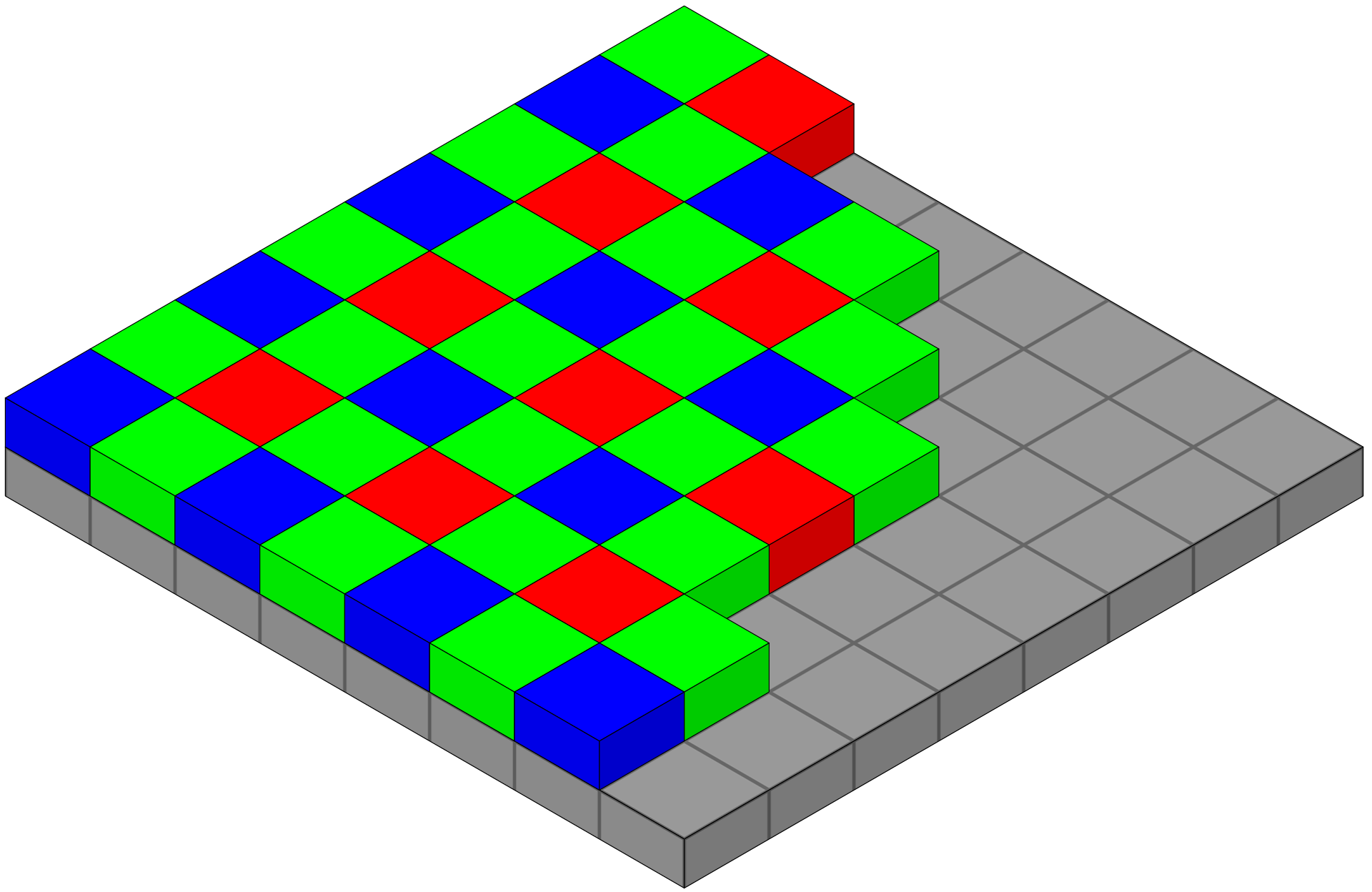

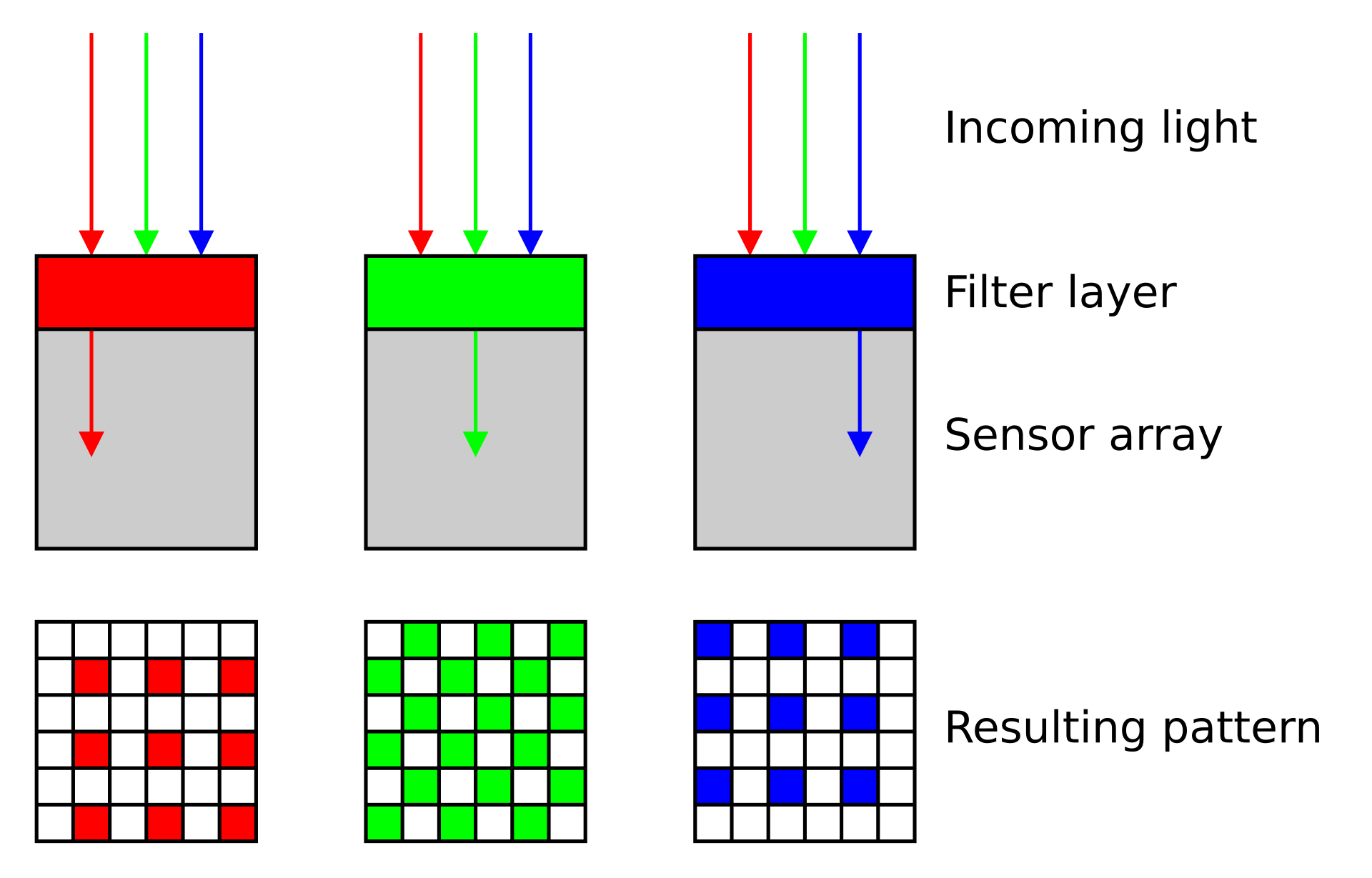

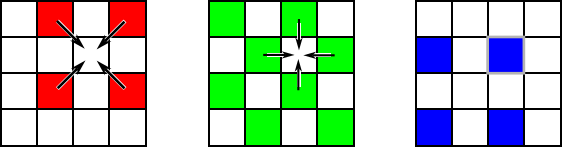

Color camera - Bayer filter array (BFA)

- A Bayer filter array (BFA) is a color filter pattern used to capture color information.

- It consists of a grid of red, green, and blue filters arranged in a repeating 2 x 2 pattern (one red, two green, one blue) over the camera’s sensor.

- Each pixel on the sensor captures light filtered through only one of these colored filters.

- Interpolation from neighboring pixels (demosaicing) fills in the two missing colors at each pixel, generating a smooth representation of a colored field of view.
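The interpolation step can be sketched in a few lines of NumPy. This is a minimal illustration, assuming an RGGB layout and simple bilinear averaging; real cameras use their own (often proprietary) demosaicing algorithms, and the function names here are hypothetical.

```python
import numpy as np

def bayer_mosaic(rgb):
    """Simulate an RGGB Bayer sensor: keep only one color sample per pixel."""
    h, w, _ = rgb.shape
    mosaic = np.zeros((h, w), dtype=float)
    mosaic[0::2, 0::2] = rgb[0::2, 0::2, 0]  # red: even rows, even columns
    mosaic[0::2, 1::2] = rgb[0::2, 1::2, 1]  # green
    mosaic[1::2, 0::2] = rgb[1::2, 0::2, 1]  # green
    mosaic[1::2, 1::2] = rgb[1::2, 1::2, 2]  # blue: odd rows, odd columns
    return mosaic

def bilinear_demosaic(mosaic):
    """Estimate the two missing colors at each pixel by averaging same-color neighbors."""
    h, w = mosaic.shape
    masks = np.zeros((h, w, 3), dtype=bool)
    masks[0::2, 0::2, 0] = True
    masks[0::2, 1::2, 1] = True
    masks[1::2, 0::2, 1] = True
    masks[1::2, 1::2, 2] = True
    out = np.zeros((h, w, 3))
    for c in range(3):
        samples = np.pad(np.where(masks[..., c], mosaic, 0.0), 1)
        counts = np.pad(masks[..., c].astype(float), 1)
        # Sum the samples and the number of samples in each 3x3 neighborhood
        sum_s = sum(samples[i:i + h, j:j + w] for i in range(3) for j in range(3))
        sum_k = sum(counts[i:i + h, j:j + w] for i in range(3) for j in range(3))
        out[..., c] = sum_s / sum_k
        # Keep the values that were actually measured at this pixel
        out[..., c][masks[..., c]] = mosaic[masks[..., c]]
    return out

rgb = np.random.default_rng(0).random((6, 8, 3))
restored = bilinear_demosaic(bayer_mosaic(rgb))
print(np.abs(restored - rgb).mean())  # non-zero: two of the three values per pixel are estimates
```

Only one of the three values at each pixel is measured; the other two are guesses from neighbors, which is why color cameras are a poor fit for quantitative intensity measurements.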

Color camera - limitations

- Each pixel sits behind a red, green, or blue filter, so it effectively captures only about 1/3 of the incoming light, leading to reduced sensitivity compared with monochrome cameras.

- The color information at each pixel is not directly captured but inferred through interpolation, so precise intensity values cannot be accurately extracted for further image analysis.

Monochrome camera

- Each pixel captures the full intensity of incoming light, resulting in higher sensitivity and better signal-to-noise ratio (SNR) compared to color cameras.

- These cameras are used for fluorescence imaging and quantitative analysis, as they provide more accurate intensity measurements without the need for interpolation.

- Used in combination with dichroic mirrors and emission filters to capture specific wavelength ranges, allowing for multi-channel imaging and analysis of different fluorophores.

- Used in transmitted-light techniques for better contrast and resolution.

- Used in scientific research and applications where accurate intensity measurements are critical, such as in cell biology, neuroscience, and materials science.

Camera types

- CCD (Charge-Coupled Device)

- EMCCD (Electron Multiplying CCD)

- sCMOS (scientific Complementary Metal-Oxide-Semiconductor)

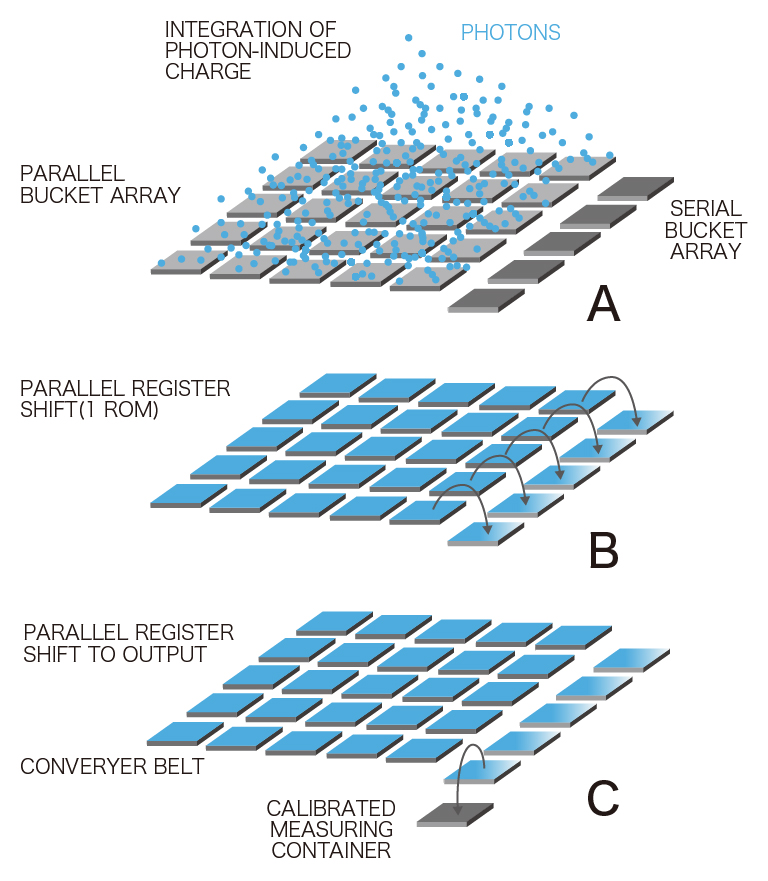

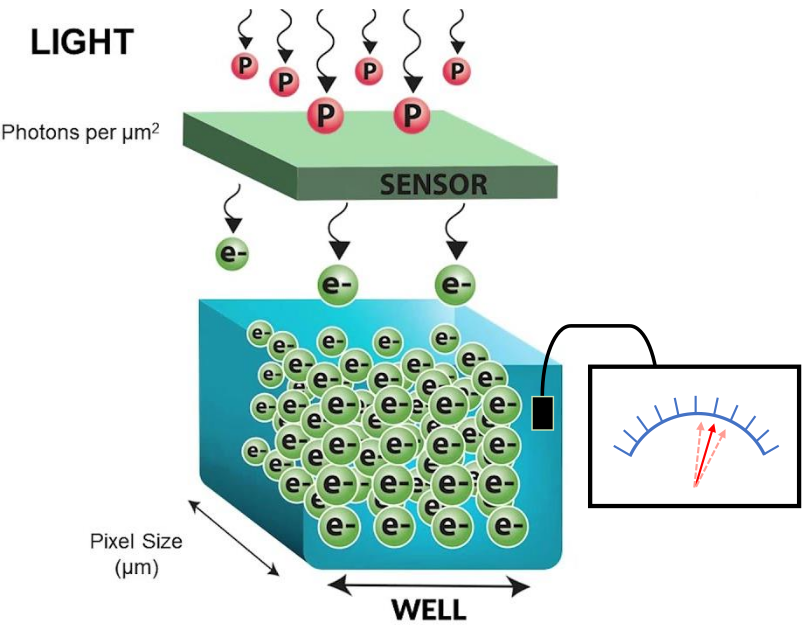

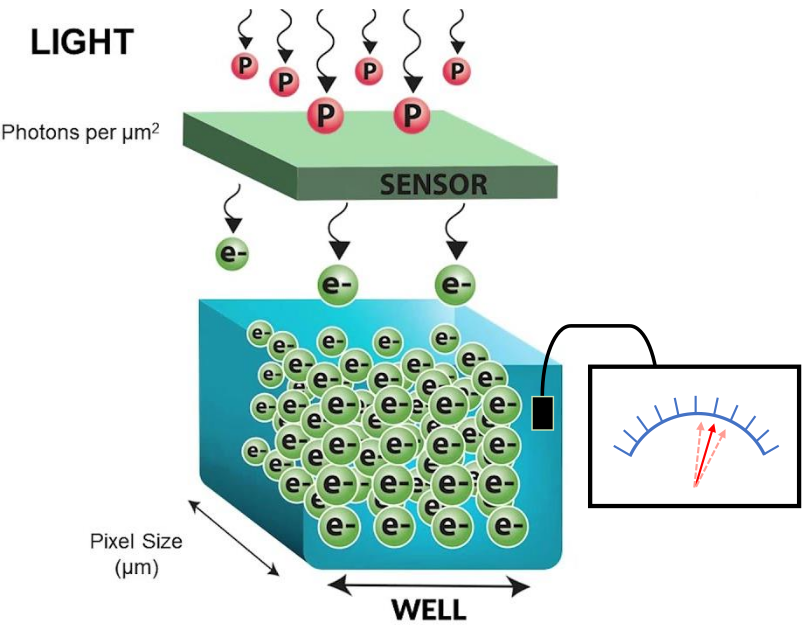

CCD camera

from https://camera.hamamatsu.com

- Each pixel of the CCD image sensor is composed of a photodiode and a potential well, which can be thought of as a bucket for photoelectrons.

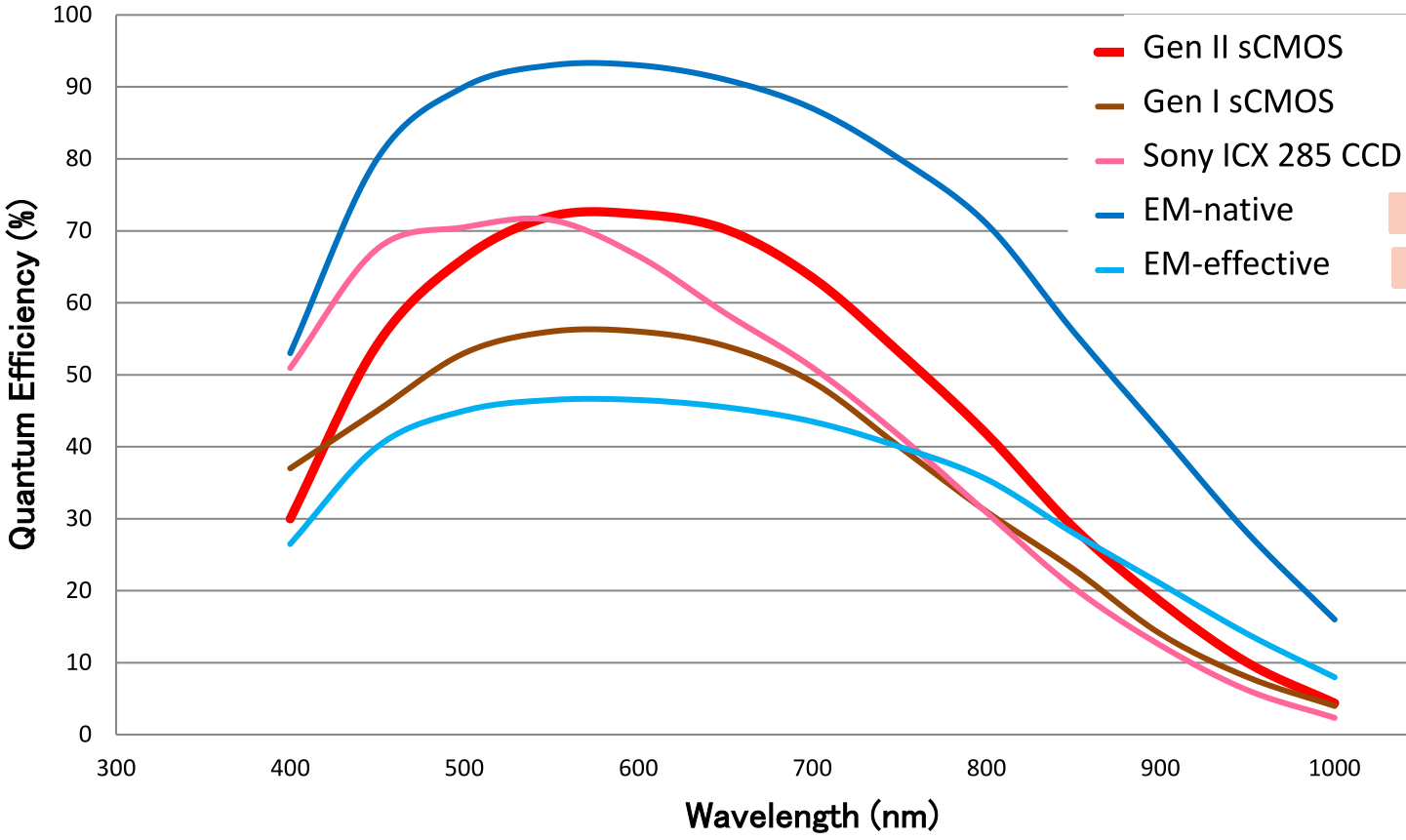

- The photodiode converts incoming photons into photoelectrons; the efficiency of this wavelength-dependent conversion is specified as the quantum efficiency (QE).

- Photoelectrons accumulate in each bucket until it’s time for readout, when all of the photoelectrons are relayed from one bucket to the next down each row of pixels.

- The charge is gathered pixel by pixel (serially) into a container at the end of the relay.

- Once in the container, the photoelectrons are converted into voltage and processed into an image on the camera circuit board.

- Because the photoelectrons are converted into signal (voltage) at a common port, the speed of image acquisition is limited.
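The bucket-brigade readout can be illustrated with a toy model in Python. This is a sketch under simplifying assumptions (no noise, perfect charge transfer, a hypothetical `ccd_readout` function): rows are shifted down one at a time into a serial register, and that register is emptied pixel by pixel through a single output node, so the number of conversions equals the total number of pixels.

```python
import numpy as np

def ccd_readout(wells):
    """Toy CCD readout: shift rows into a serial register, then convert one pixel at a time."""
    wells = wells.copy()
    n_rows, n_cols = wells.shape
    image = np.zeros_like(wells)
    n_conversions = 0  # charge-to-voltage conversions at the single output node
    for r in range(n_rows):
        serial_register = wells[-1].copy()   # bottom row moves into the serial register
        wells[1:] = wells[:-1].copy()        # every other row shifts down by one
        wells[0] = 0
        for c in range(n_cols - 1, -1, -1):
            image[n_rows - 1 - r, c] = serial_register[-1]      # pixel at the output end is converted
            serial_register[1:] = serial_register[:-1].copy()   # remaining charge moves one step
            n_conversions += 1
    return image, n_conversions

charge = np.arange(12).reshape(3, 4)
image, n = ccd_readout(charge)
print(image)
print(n)
```

Because every pixel passes through the same output node, doubling the number of pixels doubles the readout time, which is the speed limitation described above.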

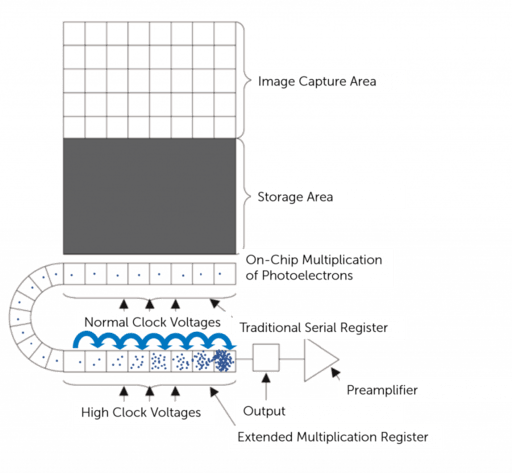

EMCCD camera

https://www.teledynevisionsolutions.com

- Similar to a CCD, except that an on-chip electron-multiplying (EM) register amplifies the signal before readout, allowing detection of very low light levels.

- Because the photoelectrons are converted into signal (voltage) at a common port, the speed of image acquisition is limited.

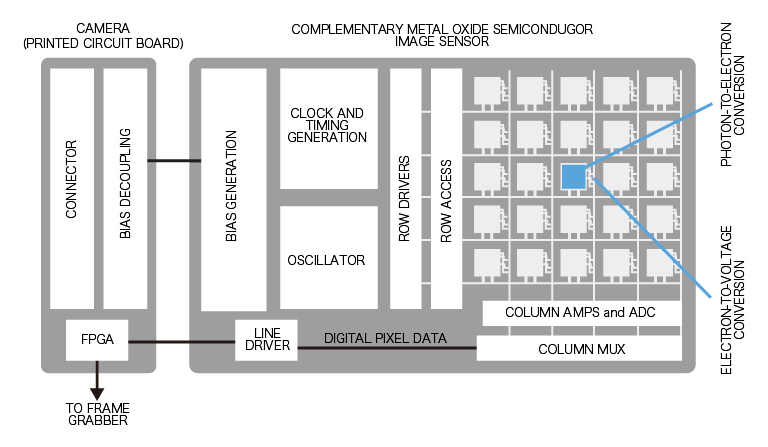

sCMOS camera

https://camera.hamamatsu.com

- In contrast with CCD and EMCCD sensors, each pixel of a CMOS image sensor is composed of a photodiode-amplifier pair.

- Unlike a CCD sensor, photoelectrons are converted into voltage by each pixel’s photodiode-amplifier pair.

- Because conversion to voltage happens in parallel rather than serially (as in a CCD), image acquisition can be much faster with CMOS sensors.

- Scientific CMOS (sCMOS) sensors combine high QE with fast frame rates and low noise, which translates into high-speed, high-resolution biological images, even in low-light situations.

- For almost all applications, newer sCMOS cameras are a great choice, but for ultra-low light (single fluorescent molecules) EMCCDs may still be better.

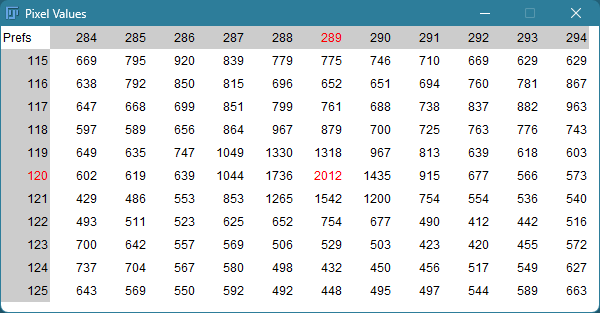

Camera noise

Random degradation of an image due to the inherent uncertainty of photon detection and of the camera's signal readout.

There are three main types of noise in microscopy cameras:

- Shot noise

- Dark noise

- Read noise

Shot noise

- Uncertainty in the arrival of photons

- Arrival of any given photon is independent and cannot be precisely predicted

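- The number of photons arriving during an exposure follows a Poisson distribution, so the noise (standard deviation) equals the square root of the mean number of photons

- It is most apparent at low signal levels, where the number of detected photons (N) is small and the relative noise (1/√N) is large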

- Shot noise can be reduced by collecting more photons, either with longer exposure times or by combining multiple frames, but this may not always be feasible due to photobleaching or phototoxicity in live samples.
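A quick simulation makes the square-root behavior concrete. This sketch assumes an ideal detector (no dark or read noise) and uses NumPy's Poisson generator; `shot_noise_snr` is a hypothetical helper. Collecting 100× more photons improves the SNR only 10×.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

def shot_noise_snr(mean_photons, n_pixels=100_000):
    """Simulate Poisson photon counts; return the measured noise (std) and SNR."""
    counts = rng.poisson(lam=mean_photons, size=n_pixels)
    noise = counts.std()
    return noise, counts.mean() / noise

for n in (10, 100, 1000, 10000):
    noise, snr = shot_noise_snr(n)
    print(f"mean={n:>6} photons  noise={noise:8.2f} (sqrt={np.sqrt(n):8.2f})  SNR={snr:6.1f}")
```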

Dark noise

- Uncertainty in the number of thermally generated electrons (dark current), which the sensor cannot distinguish from photoelectrons.

- Generated by thermal electrons in the camera sensor even in the absence of light.

- Also governed by a Poisson distribution.

- Becomes a problem for long exposures, such as in bioluminescence imaging.

- Temperature-dependent: can be reduced by cooling the camera sensor.

Read noise

Resource

https://andor.oxinst.com/learning/view/article/sensitivity-and-noise-of-ccd-emccd-and-scmos-sensors

- Uncertainty in the electronic readout process of the camera

- Inherent to converting the collected charge into a voltage signal and to the subsequent analog-to-digital conversion

- Added to every pixel at each readout, independent of the signal level

- Can be reduced by using a camera with low read noise specifications and optimizing the readout settings

- Read noise is negligible in high-signal applications, where shot noise dominates

- EMCCD: the signal is amplified before readout, so the effective read noise (read noise divided by the EM gain) is reduced to near zero, making EMCCDs ideal for low-light imaging.

| | CCD | EMCCD | sCMOS |
|---|---|---|---|
| Read noise | 2-6 e- | <1 e- | 1-2 e- |

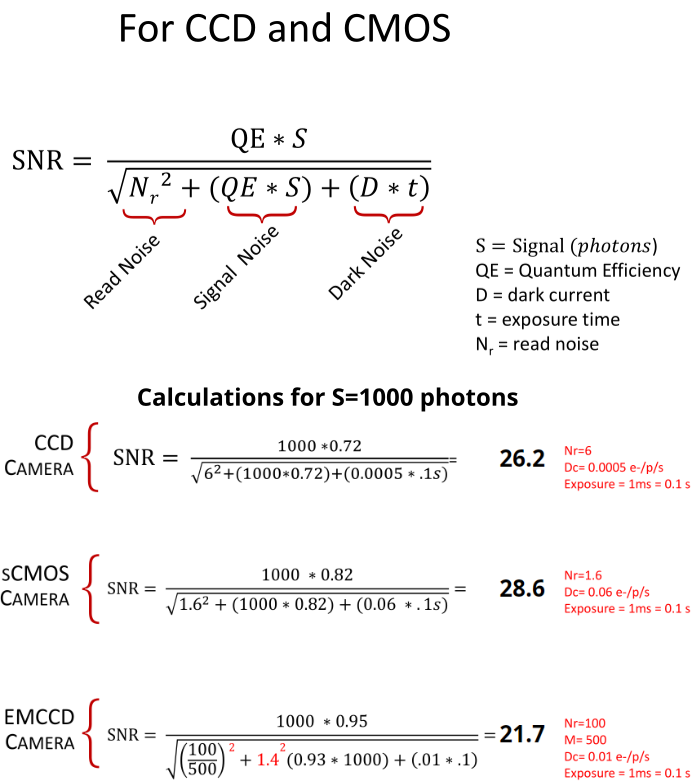

Signal-to-noise ratio (SNR)

- SNR measures the strength of the signal relative to the noise in an image.

- SNR depends on the input light level and on camera specifications (QE, the various noise sources, etc.)

- Noise is not the same as background

- Noise: random corruption of any image due to inherent uncertainty of photon detection

- Background: any detected signal that is biologically non-informative

Source: Hamamatsu white paper


Resource

Check out the SNR of different cameras under various conditions:

https://www.hamamatsu.com/sp/sys/en/camera_simulator/index.html
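The per-pixel SNR is commonly modeled by combining the noise sources above in quadrature, where S is photons per pixel, D the dark current (e-/pixel/s), t the exposure time, N_r the read noise, M the EM gain and F the excess noise factor (M = F = 1 for CCD and sCMOS; F approaches √2 for an EMCCD at high gain):

\[\text{SNR} = \frac{\text{QE} \cdot S}{\sqrt{F^2\,(\text{QE} \cdot S + D \cdot t) + (N_r / M)^2}}\]

A small Python sketch of this model follows. The camera parameters are illustrative assumptions, not specifications of any real camera; check your own camera's datasheet or the simulator linked above.

```python
import math

def pixel_snr(photons, qe, read_noise, dark_current=0.0, exposure_s=0.0,
              em_gain=1.0, excess_noise=1.0):
    """Per-pixel SNR from shot, dark and read noise (noise terms in electrons)."""
    signal_e = qe * photons                  # detected photoelectrons
    dark_e = dark_current * exposure_s       # thermal electrons accumulated during the exposure
    poisson_var = excess_noise**2 * (signal_e + dark_e)   # shot + dark noise variance
    effective_read = read_noise / em_gain    # EM gain shrinks read noise relative to the signal
    return signal_e / math.sqrt(poisson_var + effective_read**2)

# Illustrative (assumed) parameters, not taken from any datasheet
cameras = {
    "CCD":   dict(qe=0.65, read_noise=6.0),
    "sCMOS": dict(qe=0.80, read_noise=1.5),
    "EMCCD": dict(qe=0.95, read_noise=50.0, em_gain=300.0, excess_noise=math.sqrt(2)),
}

for photons in (1, 10, 100, 1000):
    row = {name: round(pixel_snr(photons, **p), 2) for name, p in cameras.items()}
    print(photons, row)
```

With these assumed numbers the EMCCD has the best SNR only at the very lowest light levels, while the sCMOS wins at higher signals because the excess noise factor penalizes the EMCCD; this matches the rule of thumb that EMCCDs remain useful for ultra-low light.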

Signal-to-background ratio (SBR)

- SBR is a measure of signal strength relative to background signal.

- Sample dependent, not camera dependent.

- Higher SBR indicates better contrast between the object of interest and the background.

- SBR can be improved by using specific stains, optimizing imaging conditions, and applying image processing techniques.
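Both ratios can be estimated directly from an image using a signal ROI and a background ROI. The sketch below uses one common convention (SBR = mean signal / mean background; SNR estimated as (mean signal - mean background) / standard deviation of the background); other definitions exist, and the synthetic image and ROI coordinates are made up for illustration.

```python
import numpy as np

def sbr_and_snr(image, signal_roi, background_roi):
    """Estimate SBR and SNR from two ROIs given as (row_slice, col_slice) tuples."""
    signal = image[signal_roi].astype(float)
    background = image[background_roi].astype(float)
    sbr = signal.mean() / background.mean()
    snr = (signal.mean() - background.mean()) / background.std()
    return sbr, snr

# Synthetic image: Poisson background of ~100 counts with a brighter square of ~400 counts
rng = np.random.default_rng(1)
expected = np.full((64, 64), 100.0)
expected[20:40, 20:40] = 400.0
image = rng.poisson(expected)

sbr, snr = sbr_and_snr(image,
                       signal_roi=(slice(25, 35), slice(25, 35)),
                       background_roi=(slice(0, 15), slice(0, 15)))
print(f"SBR = {sbr:.2f}, SNR = {snr:.1f}")
```

Raising the background level in `expected` lowers the SBR (a sample property), while collecting more photons overall raises the SNR; the two measures answer different questions.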

Resources - camera websites

Part 2

What is an image and how to analyze it.

Why should I learn about images and analysis in an era of AI and Deep Learning?

https://forum.image.sc/

Images as pixels

Sharma et al. 2021, PMID: 34205257

- Images are composed of a grid of pixels

- Each pixel contains intensity information
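In code, a single-channel image is simply a 2D array of numbers: rows × columns of pixels, each holding an intensity value. A minimal NumPy sketch (the tiny 4 × 5 image and its values are invented for illustration):

```python
import numpy as np

# A tiny 4 x 5 single-channel "image"; each entry is one pixel's intensity value
image = np.array([
    [10, 12,  11, 10,  9],
    [11, 80,  95, 78, 10],
    [12, 90, 250, 88, 11],
    [10, 76,  92, 81, 12],
], dtype=np.uint8)

print(image.shape)   # (4, 5): (rows, columns) = (height, width)
print(image[2, 2])   # 250: intensity of the pixel at row 2, column 2
print(image.min(), image.max(), image.mean())
```

Note that NumPy indexes as [row, column], i.e. [y, x], whereas Fiji reports pixel positions as (x, y).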

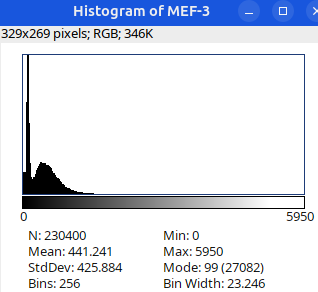

Bit depth

- Number of available grey levels used to represent the signal intensity

- Determines how “finely” the signal is sliced into discrete intensity levels

- The number of bits (N) determines the range of intensity levels: 2^N levels, from 0 to 2^N - 1

| Image type | Range of intensity levels (0 to 2^N - 1) |
|---|---|
| 8-bit | 0-255 |
| 12-bit | 0-4,095 |
| 16-bit | 0-65,535 |
| 32-bit (floating point in Fiji) | decimal values, not restricted to 0 to 2^N - 1 |
| RGB color (3 x 8 bits) | 0-255 per channel |

| Bit depth (N) | # shades (2^N) |
|---|---|
| 1 | 2 |
| 2 | 4 |
| 3 | 8 |
| 4 | 16 |
| 5 | 32 |
| 6 | 64 |
| 7 | 128 |
| 8 | 256 |
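The effect of bit depth can be shown by quantizing the same smooth intensity ramp at different N. This is a sketch assuming a signal already normalized to the range 0 to 1; `quantize` is a hypothetical helper, not a Fiji function.

```python
import numpy as np

def quantize(signal, n_bits):
    """Map a signal in [0, 1] onto the integer grey levels 0 .. 2**n_bits - 1."""
    max_level = 2**n_bits - 1
    return np.round(np.clip(signal, 0.0, 1.0) * max_level).astype(np.int64)

signal = np.linspace(0.0, 1.0, 1000)   # a smooth intensity ramp
for n_bits in (1, 2, 3, 8, 12, 16):
    levels = np.unique(quantize(signal, n_bits))
    print(f"{n_bits:>2}-bit: {2**n_bits:>6} possible levels, "
          f"{levels.size:>4} used by this ramp, max = {levels.max()}")
```

At 1 to 3 bits the ramp collapses into a few visible steps; from 8 bits upward the steps are finer than the eye can distinguish, but the extra levels still matter for measurement.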

Dynamic range vs bit depth

Dynamic range is the ratio between the maximum and minimum signals that can be measured by the camera.

\[\text{Dynamic Range} = \frac{\text{Full Well Capacity}}{\text{Read Noise}}\]

- Full well capacity is the maximum number of electrons a sensor can hold before saturation

- Read noise sets the noise floor, i.e. the smallest signal that can be distinguished from noise

Dynamic range is about the sensor’s ability to capture extreme differences in light.

Bit depth is about the digital resolution used to record (digitize) those differences.

Analogy

- Think of Dynamic Range as the total height of a staircase (from the floor to the ceiling).

- Think of Bit Depth as the number of steps in that staircase.

- A higher bit depth means more steps, making the transition from floor to ceiling smoother, but it doesn’t necessarily make the ceiling any higher.
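A worked example of the formula, with assumed sensor values (a full well of 30,000 e- and 1.5 e- read noise; illustrative numbers, not from a specific camera): the dynamic range is 30,000 / 1.5 = 20,000:1, about 86 dB, and representing that many distinguishable steps needs about log2(20,000) ≈ 14.3, i.e. 15 bits.

```python
import math

def dynamic_range(full_well_e, read_noise_e):
    """Return dynamic range as a ratio, in decibels, and the bits needed to digitize it."""
    ratio = full_well_e / read_noise_e
    decibels = 20 * math.log10(ratio)
    bits_needed = math.ceil(math.log2(ratio))
    return ratio, decibels, bits_needed

# Illustrative (assumed) sensor values
ratio, db, bits = dynamic_range(full_well_e=30_000, read_noise_e=1.5)
print(f"{ratio:,.0f}:1 ({db:.1f} dB), needs about {bits} bits")
```

In the staircase analogy, an 8-bit output would squeeze this 20,000:1 range into only 256 steps, whereas a 16-bit output leaves room for every distinguishable level.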

Resources

https://www.teledynevisionsolutions.com/learn/learning-center/imaging-fundamentals/bit-depth-full-well-and-dynamic-range/

How do grey intensity values relate to photons?

Resources

https://www.teledynevisionsolutions.com/learn/learning-center/imaging-fundamentals/camera-gain/

https://www.teledynevisionsolutions.com/learn/learning-center/imaging-fundamentals/camera-sensitivity/

https://www.azom.com/article.aspx?ArticleID=20215

Intensity values (grey levels, or analog-to-digital units, ADU) are proportional to the number of photons detected by the camera sensor, once the camera offset is subtracted.

- Convert grey levels to photoelectrons (gain in e-/ADU) \[\text{Photoelectrons} = (\text{Grey levels} - \text{Offset}) \times \text{Gain}\]

- Convert photoelectrons to photons \[\text{Photons} = \frac{\text{Photoelectrons}}{\text{QE}}\]

You need to know the camera offset, the gain and the quantum efficiency (QE) of the camera sensor at the specific wavelength.
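The two formulas combine into a short conversion function. The values below (offset 100 ADU, gain 0.5 e-/ADU, QE 0.8) are hypothetical; look up the real values for your camera and readout mode, and check whether its documentation quotes gain as e-/ADU (as used here) or as its inverse, ADU/e-.

```python
import numpy as np

def adu_to_photons(grey_levels, offset_adu, gain_e_per_adu, qe):
    """Convert raw grey levels (ADU) to estimated photoelectrons and photons."""
    grey = np.asarray(grey_levels, dtype=float)
    photoelectrons = (grey - offset_adu) * gain_e_per_adu   # remove offset, apply gain
    photons = photoelectrons / qe                            # correct for quantum efficiency
    return photoelectrons, photons

# Hypothetical camera settings, for illustration only
electrons, photons = adu_to_photons([100, 300, 2100], offset_adu=100, gain_e_per_adu=0.5, qe=0.8)
print(electrons)
print(photons)
```

A pixel reading exactly the offset (100 ADU here) corresponds to zero detected photons, which is why raw grey levels are not themselves proportional to light.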

Image display vs image analysis

Image display

- Images are usually enhanced for better visualization (human eye) of features of interest

- qualitative assessment of the features of interest in the image

- typically figure panels for publications, presentations etc.

- Tools:

- brightness/contrast

- Lookup table (LUT)

- Converting image (16/32 bit) to RGB
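Brightness/contrast settings change only how pixel values are mapped to screen intensities, not the data itself. A rough sketch of a linear min/max display mapping (similar in spirit to a display range, not Fiji's actual implementation; `to_display` is hypothetical):

```python
import numpy as np

def to_display(image, display_min, display_max):
    """Linearly map [display_min, display_max] to 0..255 for viewing; the input is not modified."""
    scaled = (image.astype(float) - display_min) / (display_max - display_min)
    return (np.clip(scaled, 0.0, 1.0) * 255).round().astype(np.uint8)

raw = np.array([[100, 500], [1000, 4000]], dtype=np.uint16)   # made-up 16-bit data
dim = to_display(raw, 0, 4000)        # wide display range: image looks dim
bright = to_display(raw, 100, 1000)   # narrow display range: bright, with saturated pixels
print(dim)
print(bright)
print(raw)                            # the raw pixel values are unchanged
```

The two displayed versions look very different, yet any measurement on `raw` gives the same result; the mapping should only be "burned in" (e.g. RGB conversion) for figures, never for analysis.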

Image display vs image analysis

Image analysis

- quantitative measurement of features of interest

- Cell/nuclei counting, fluorescence intensity, shape etc.

- It’s best to avoid any photo editing software (Photoshop, GIMP etc.)

- Any visual enhancement (pixel value change) is prohibited, except…

- Caveats: certain image enhancements are allowed:

- image processing filters (e.g. Gaussian blur, median filter, gamma etc.). The original, unprocessed image must be used for intensity measurements.

- deconvolution

- make sure to apply the same image processing to all the images in a dataset, including controls and experimental groups, to avoid bias

- All image processing steps should be properly described in the methods section and/or figure legend of the paper.

Resource: preparing images and describing image analyses for publication

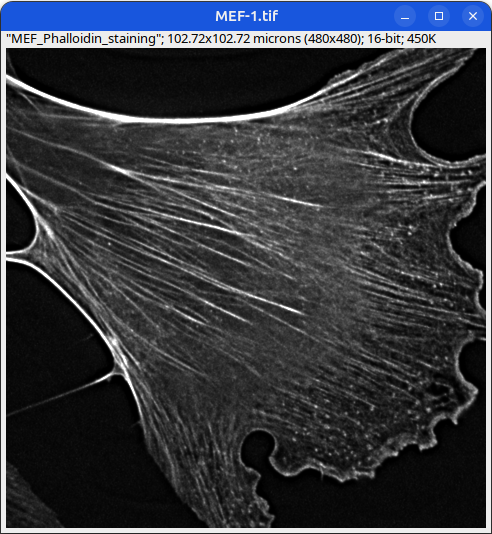

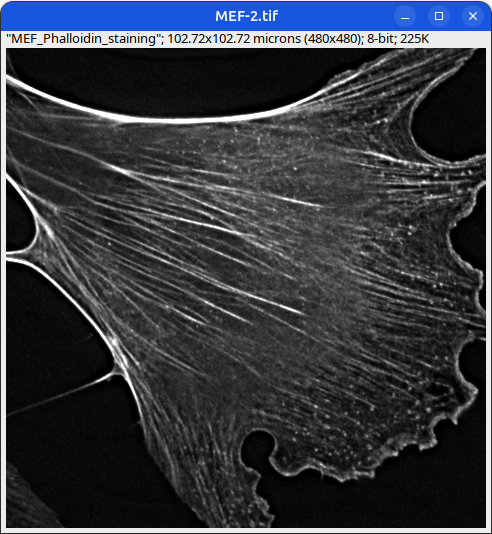

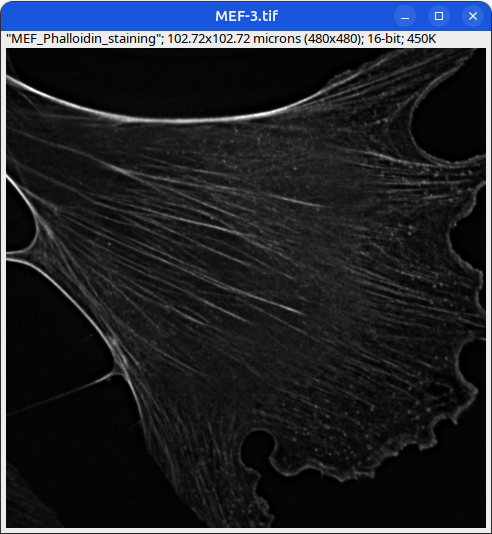

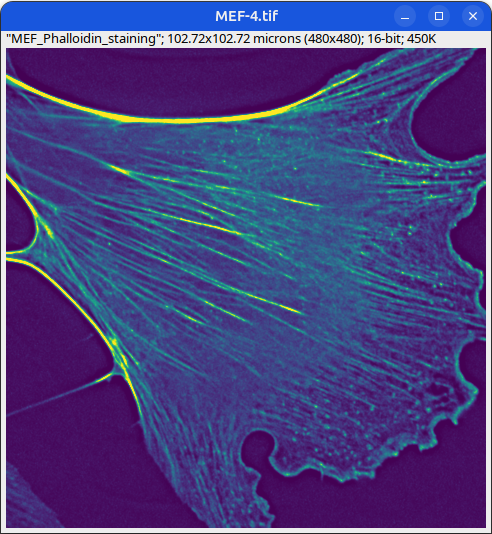

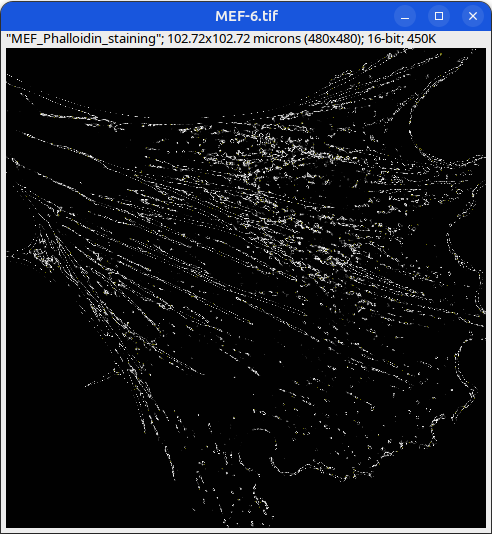

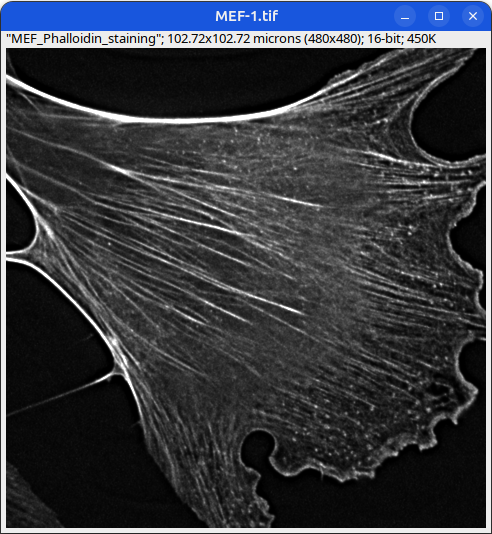

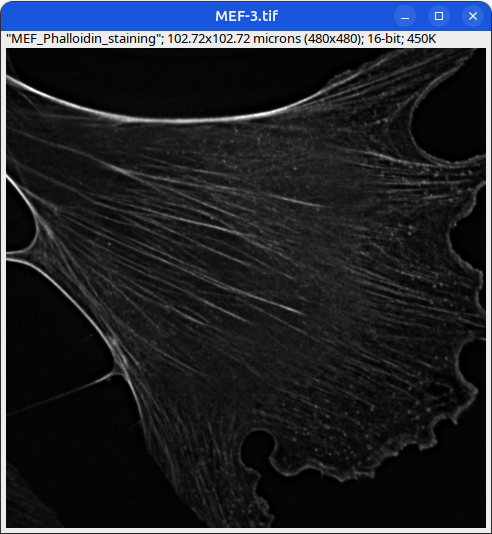

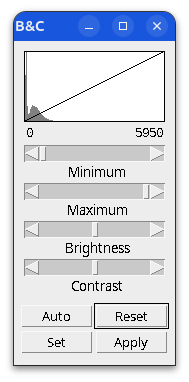

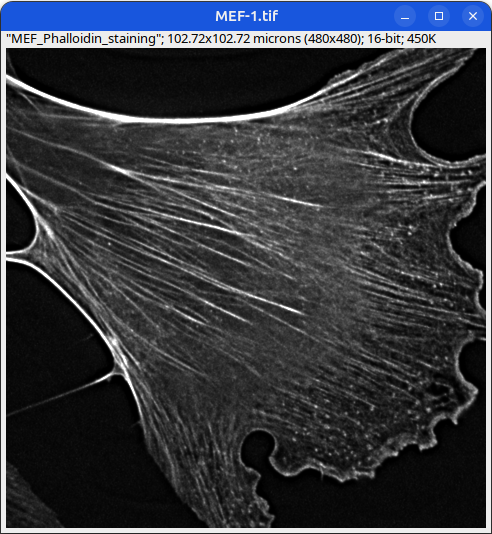

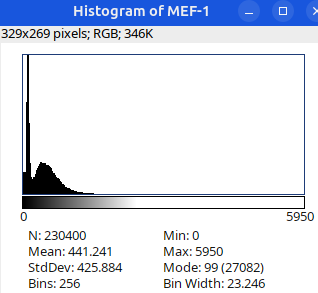

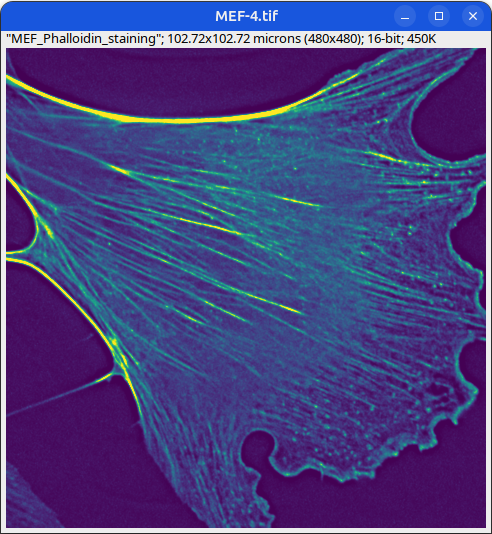

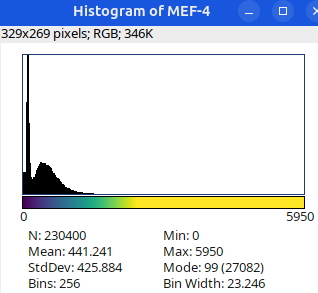

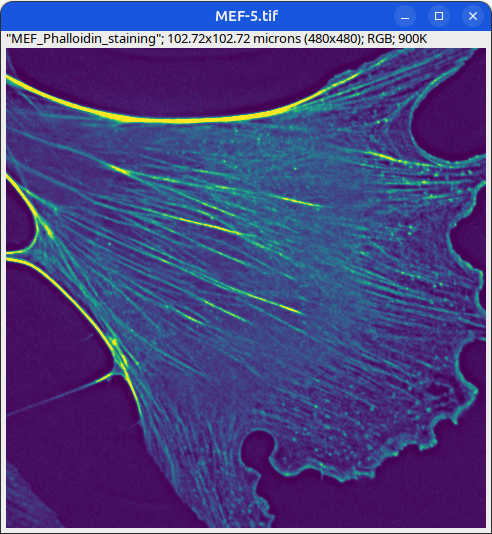

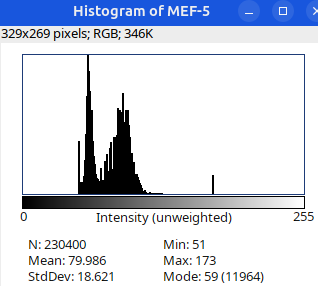

Can you tell which images are the same and which are different?

Brightness and contrast settings are different for the two images, but the pixel values are identical.

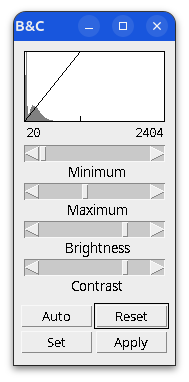

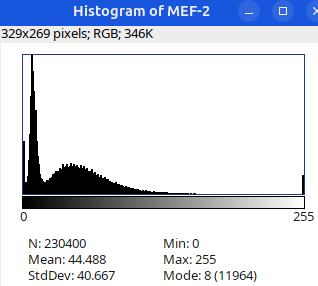

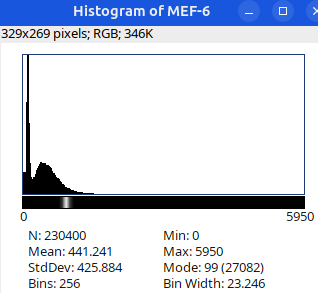

Next: let’s check out the histograms of all 6 images. In Fiji, go to Analyze > Histogram (or press H).

Image display vs image analysis

Images that look the same may have different pixel values.

Images that look different may have identical pixel values.

When in doubt, check:

- Histogram

- Brightness and Contrast

- Lookup table (LUT)
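The same check can be scripted: compare pixel data and histograms directly instead of trusting what is on screen. A minimal NumPy sketch, where the arrays are synthetic stand-ins for two exported images and `histogram` is a hypothetical helper:

```python
import numpy as np

def histogram(image):
    """Exact count of pixels at each integer grey level (one bin per value)."""
    return np.bincount(image.ravel())

rng = np.random.default_rng(2)
img_a = rng.poisson(200, size=(128, 128)).astype(np.uint16)
img_b = img_a.copy()                                      # identical data, whatever the display settings
img_c = (img_a.astype(float) * 1.5).astype(np.uint16)    # can look the same after auto-contrast

print(np.array_equal(img_a, img_b), np.array_equal(img_a, img_c))
print(np.array_equal(histogram(img_a), histogram(img_b)))
```

Identical pixel arrays always give identical histograms, regardless of brightness/contrast or LUT; different histograms prove the underlying data differ.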

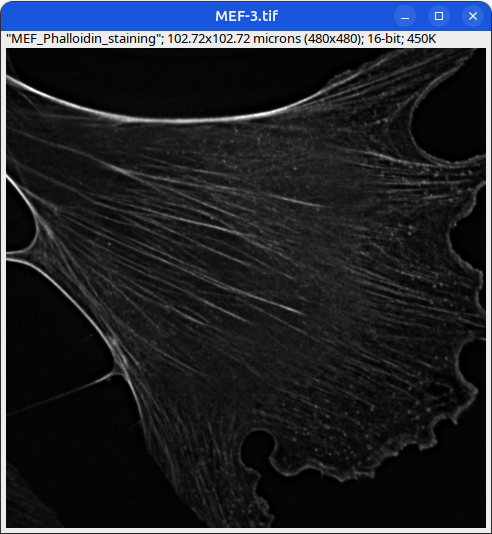

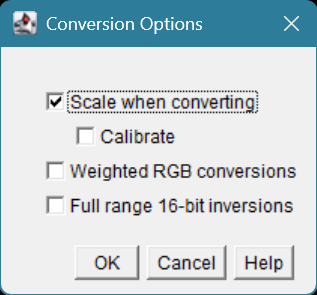

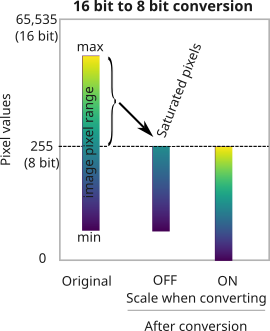

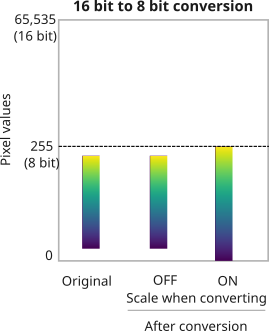

Changing image bit-depth

Why would you want to change the bit-depth of an image?

- to save space (rarely)

- Because a particular ImageJ/Fiji plugin only works with 8/16/32-bit images (most common reason!)

- so that a large image can be loaded completely into RAM for quick visualization.

In Fiji, go to Edit > Options > Conversions...

By default, the "Scale when converting" option is checked ON.
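The difference between converting with and without scaling can be approximated in NumPy. This is a sketch of the general idea (linear rescaling of the display range to 0-255 versus clipping everything above 255), not Fiji's source code; `to_8bit` and the pixel values are hypothetical.

```python
import numpy as np

def to_8bit(image16, scale=True, display_min=None, display_max=None):
    """Approximate a 16-bit to 8-bit conversion, with or without scaling."""
    img = image16.astype(float)
    if scale:
        lo = img.min() if display_min is None else display_min
        hi = img.max() if display_max is None else display_max
        img = (img - lo) / (hi - lo) * 255       # map the display range onto 0..255
    return np.clip(np.round(img), 0, 255).astype(np.uint8)   # values outside 0..255 are clipped

raw = np.array([0, 200, 1000, 4095], dtype=np.uint16)   # made-up 12-bit values in a 16-bit image
print(to_8bit(raw, scale=True))                                 # relative differences kept
print(to_8bit(raw, scale=False))                                # everything above 255 saturates
print(to_8bit(raw, scale=True, display_min=0, display_max=1000))  # result depends on display range
```

Either way the conversion changes pixel values irreversibly, and with scaling ON the result depends on the current display range, so intensities should be measured on the original image.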

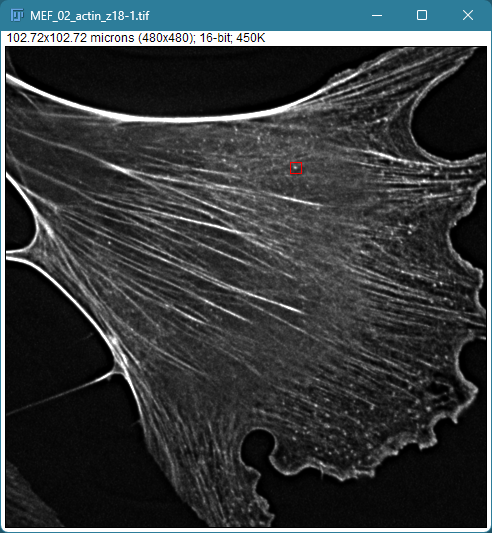

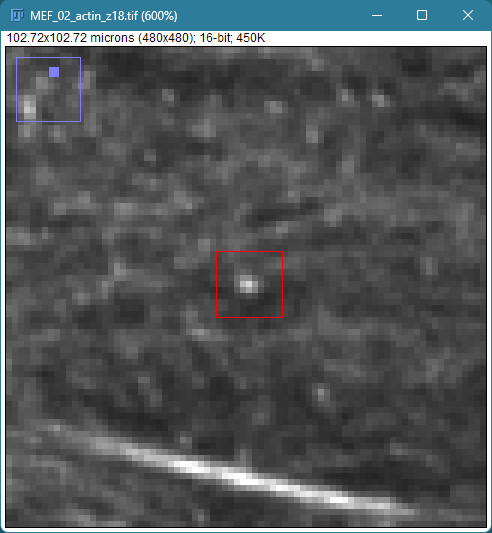

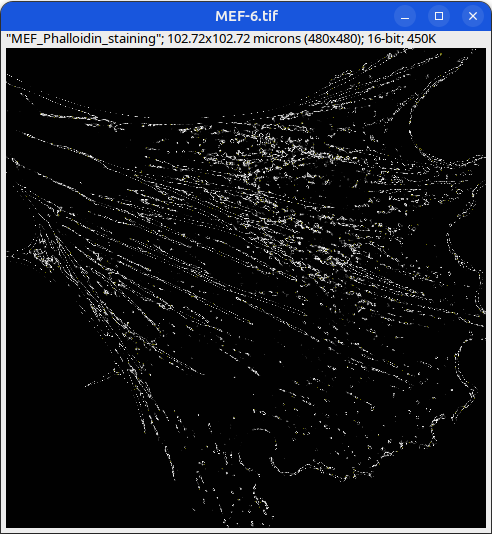

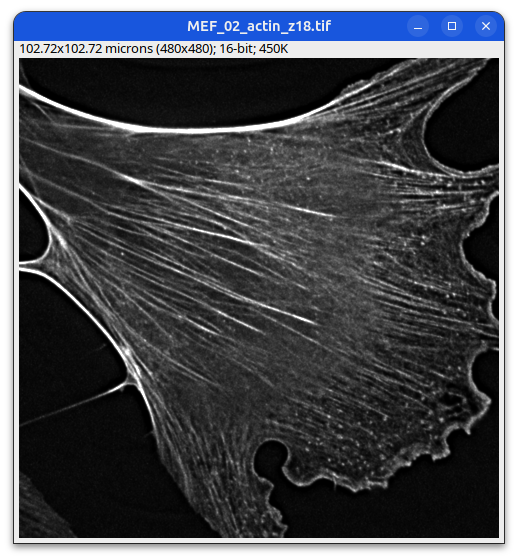

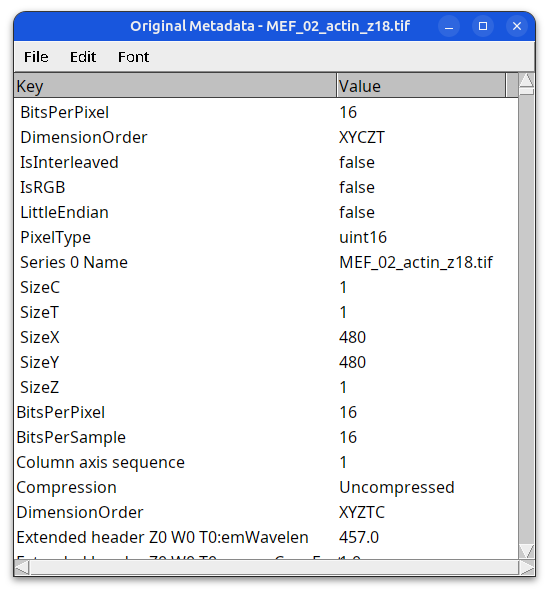

Image Metadata

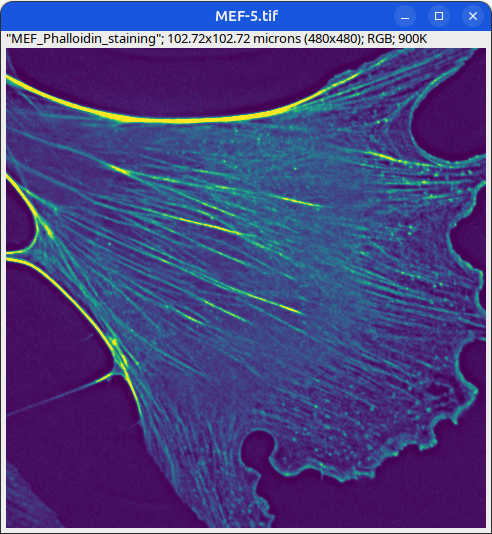

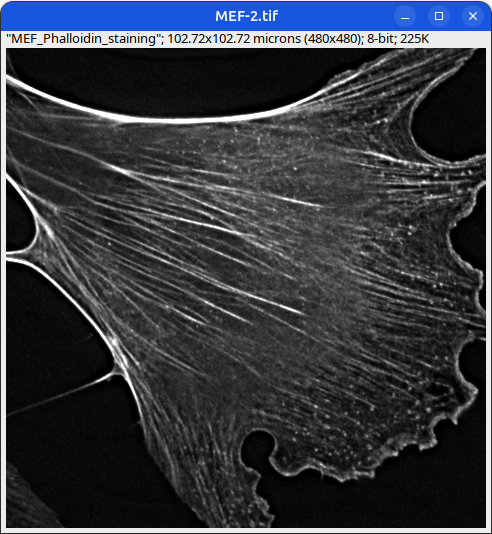

Some metadata is displayed under the image title.

Mouse embryonic fibroblast stained with Phalloidin

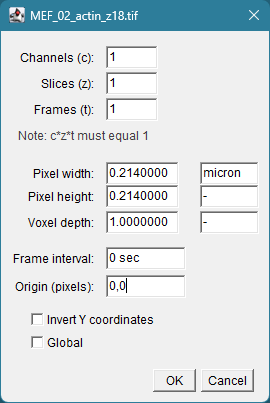

A bit more metadata can be found under:

Image > Properties

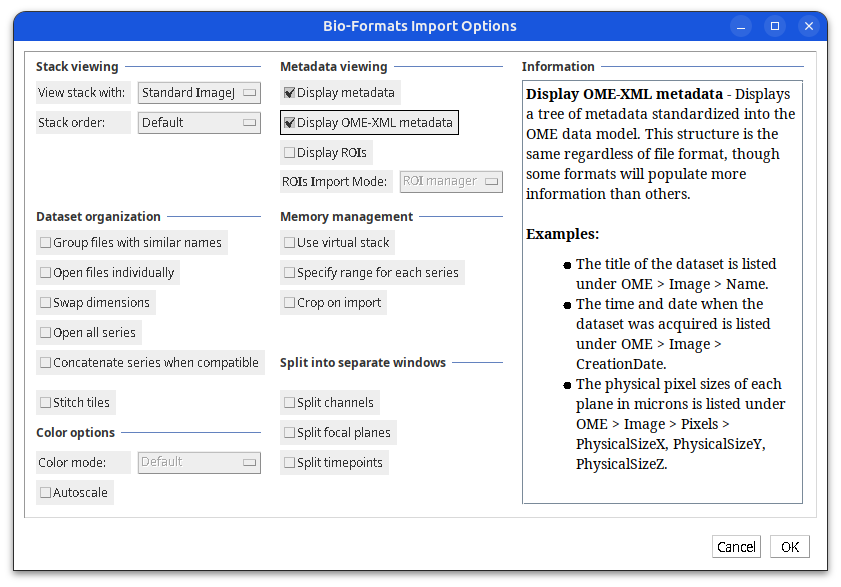

Image Metadata (detailed)

Plugins > Bio-Formats > Bio-Formats Importer

Image Metadata (detailed)

metadata as key/value pairs

Image Metadata (detailed)

metadata in OME-XML format
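OME-XML is ordinary XML, so key acquisition parameters can also be read programmatically. The sketch below parses a tiny, hand-written OME-XML fragment with Python's standard library; real files contain far more, and while the attribute names (SizeX, Type, PhysicalSizeX, etc.) follow the OME schema, the namespace version in your own files may differ.

```python
import xml.etree.ElementTree as ET

# A minimal, hand-written OME-XML fragment for illustration (not from a real acquisition)
OME_XML = """<OME xmlns="http://www.openmicroscopy.org/Schemas/OME/2016-06">
  <Image ID="Image:0" Name="fibroblast_phalloidin">
    <Pixels ID="Pixels:0" DimensionOrder="XYCZT" Type="uint16"
            SizeX="1024" SizeY="1024" SizeC="1" SizeZ="1" SizeT="1"
            PhysicalSizeX="0.108" PhysicalSizeXUnit="µm"
            PhysicalSizeY="0.108" PhysicalSizeYUnit="µm"/>
  </Image>
</OME>"""

def pixel_metadata(ome_xml):
    """Return the Pixels attributes (dimensions, data type, pixel size) of the first image."""
    root = ET.fromstring(ome_xml)
    # OME elements live in an XML namespace; take it from the root tag "{namespace}OME"
    ns = root.tag.split("}")[0].strip("{")
    pixels = root.find(f"{{{ns}}}Image/{{{ns}}}Pixels")
    return dict(pixels.attrib)

meta = pixel_metadata(OME_XML)
print(meta["SizeX"], meta["SizeY"], meta["Type"])
print(meta["PhysicalSizeX"], meta["PhysicalSizeXUnit"])
```

The pixel size and data type read this way are exactly the values needed to calibrate distances and interpret intensities in an analysis script.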

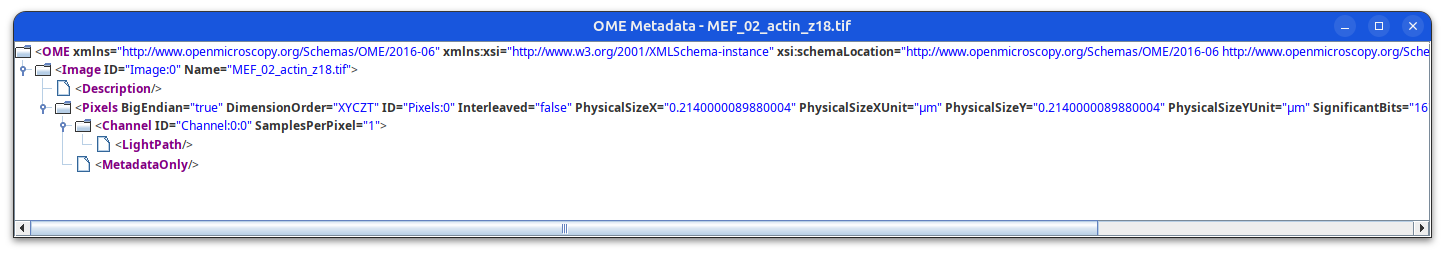

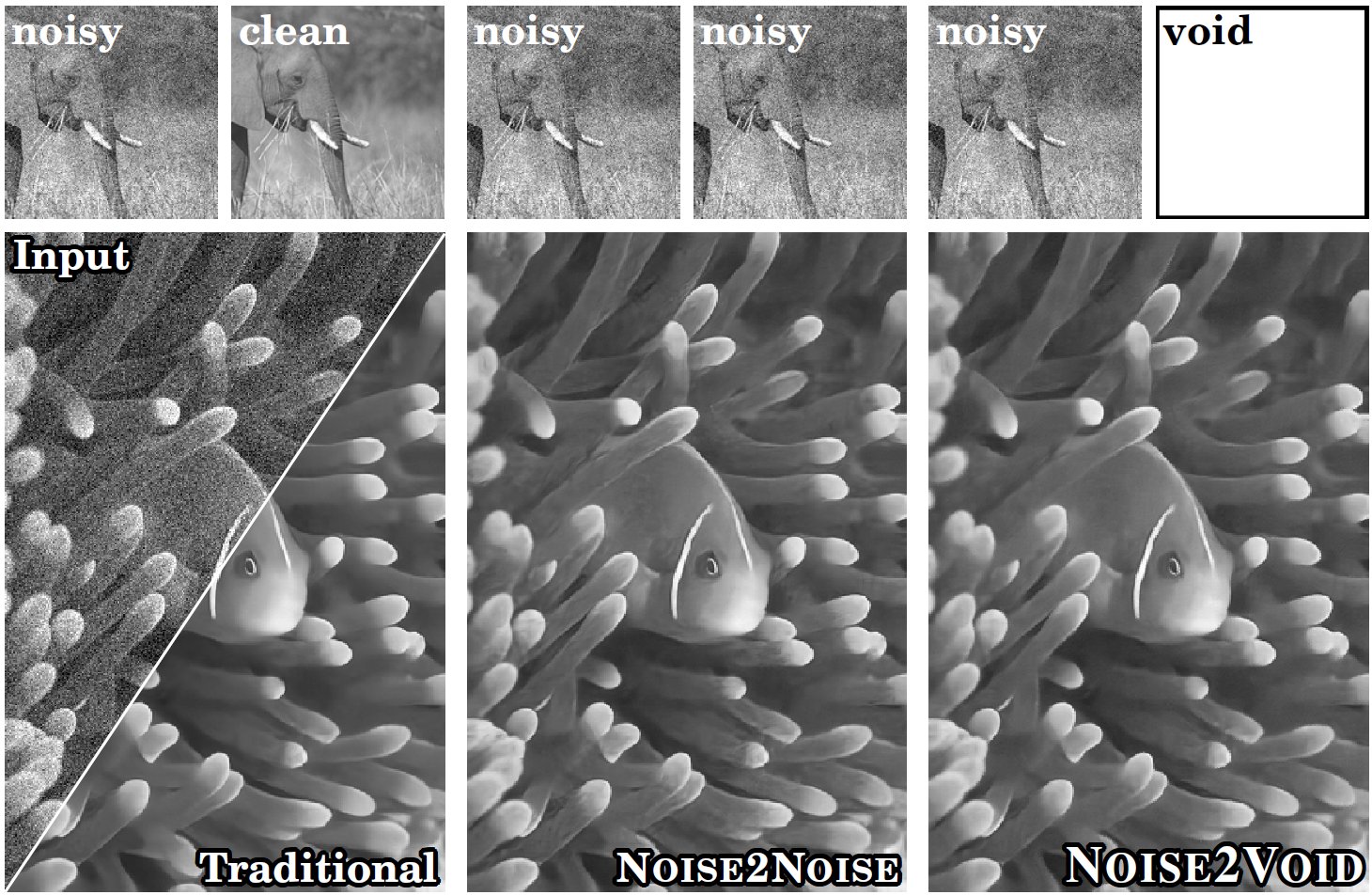

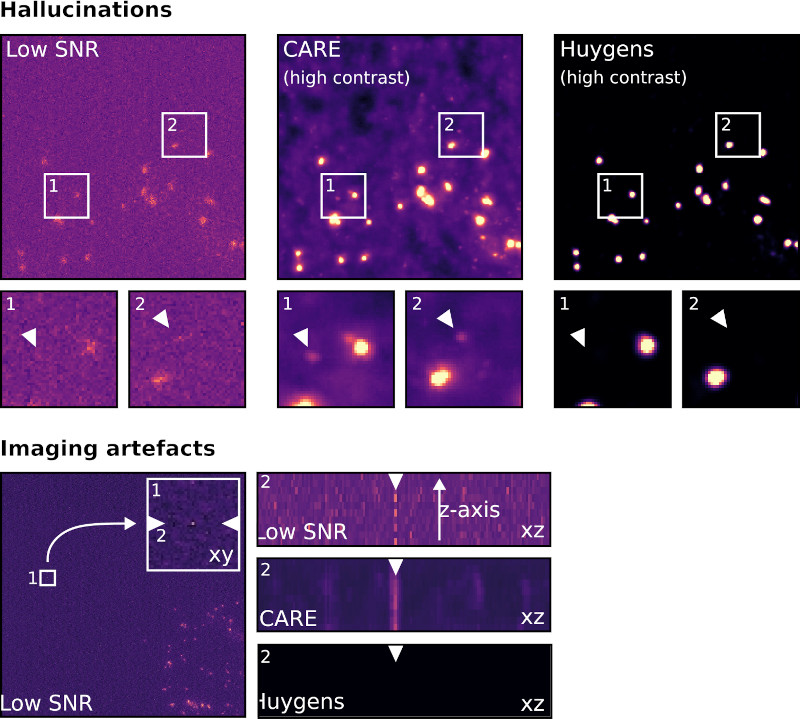

Noise reduction: AI-Denoising

- Various AI-denoising methods have been developed by training deep learning models on:

  - pairs of noisy (low SNR) and clean (high SNR) images, e.g.

    - CARE (Content-Aware Image Restoration)

    - RCAN (Residual Channel Attention Networks, from Aivia)

  - pairs of noisy images: Noise2Noise (N2N)

  - single noisy images: Noise2Void (N2V)

- Problems with AI-denoising:

  - AI can create, alter, or hallucinate features that do not exist in the raw data, producing images that look realistic but are scientifically inaccurate.

  - Resource-intensive: supervised models such as CARE require hundreds to thousands of paired (noisy/clean) images to train effectively.

  - Some methods, such as Noise2Void, fail to remove structured noise.

Concept of deconvolution: https://zeiss-campus.magnet.fsu.edu/articles/basics/psf.html

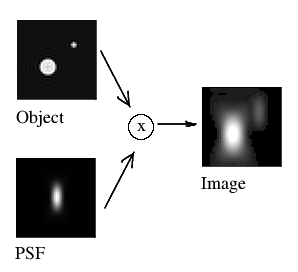

Noise reduction: Deconvolution

- A mathematical operation that reverses the effects of convolution (blurring due to out-of-focus light) in an image, using the point spread function (PSF) of the imaging system.

- Does not require deep learning model training, so it is less computationally intensive than AI-denoising.

- It does require an accurate estimate of the PSF for optimal results.

- Deconvolution can improve image resolution and contrast without hallucinating features, because it is based on the physical properties of the imaging system (the PSF) rather than on patterns learned from training data.

- Overall, deconvolution is a more reliable method for noise reduction in microscopy images than AI-denoising.
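Richardson-Lucy is one widely used iterative deconvolution algorithm, shown here only as an example of the technique. The 1D NumPy sketch assumes a known Gaussian PSF and Poisson noise (all values are made up): two closely spaced point sources, blurred into overlapping peaks, become much sharper and clearly separated.

```python
import numpy as np

def gaussian_psf(sigma, half_width):
    """Normalized 1D Gaussian point spread function (an assumed PSF model)."""
    x = np.arange(-half_width, half_width + 1)
    psf = np.exp(-x**2 / (2 * sigma**2))
    return psf / psf.sum()

def richardson_lucy(observed, psf, n_iter=50):
    """Richardson-Lucy deconvolution: multiplicative updates that keep the estimate non-negative."""
    estimate = np.full_like(observed, observed.mean(), dtype=float)
    psf_mirror = psf[::-1]
    for _ in range(n_iter):
        blurred = np.convolve(estimate, psf, mode="same")          # re-blur the current guess
        ratio = observed / np.maximum(blurred, 1e-12)              # compare with the data
        estimate *= np.convolve(ratio, psf_mirror, mode="same")    # correct the guess
    return estimate

# Two point sources 8 pixels apart, blurred by the PSF, plus a background of 5 and shot noise
truth = np.zeros(101)
truth[46] = truth[54] = 5000.0
psf = gaussian_psf(sigma=3.0, half_width=15)
rng = np.random.default_rng(3)
observed = rng.poisson(np.convolve(truth, psf, mode="same") + 5.0).astype(float)

restored = richardson_lucy(observed, psf, n_iter=200)
print("observed at 46/50/54:", observed[[46, 50, 54]])
print("restored at 46/50/54:", restored[[46, 50, 54]].round(1))
```

Running too many iterations amplifies noise, and a wrong PSF produces artifacts, which is why an accurate PSF estimate matters.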

Resource

https://svi.nl/AI-Image-Denoising

Acknowledgements

- Alison North

- Tom Carroll

- James Hudspeth

- Tao Tong

- Ivan Rey Suarez

- Behzad Khajavi

- Maria Belen Harreguy Alfonso

- Priyam Banerjee

Our wonderful users and collaborators!
